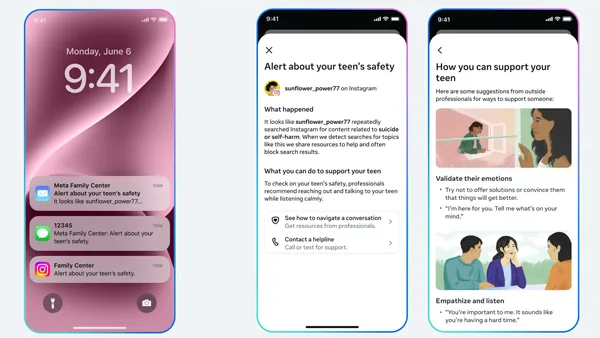

Instagram is introducing a new safety feature for parents of teenagers who use the platform. The update will notify a parent or guardian if their teen repeatedly searches for content related to suicide or self-harm within a short period.

Once alerted, parents will have the option to view guidance and resources designed to help them start a thoughtful and supportive conversation with their child about mental health. The feature will begin rolling out next week to users enrolled in parental supervision in the United States, the United Kingdom, Australia, and Canada, with additional countries expected to follow.

In a blog post outlining the change, Instagram said it has set a threshold that requires multiple related searches over a short span before a notification is triggered. The company acknowledged that this cautious approach could occasionally produce alerts even when there is no immediate danger. However, it said experts agree that prioritizing early awareness is an appropriate starting point. Instagram added that it plans to evaluate feedback and adjust the system if necessary.

The company also emphasized that, under its existing policies, search results tied to suicide and self-harm are restricted for younger teens, and such content is not surfaced to them on the platform.

Instagram also said it is developing a comparable parental alert system for its AI-powered features. More details about that expansion are expected later this year.